AI is moving from experimentation to large-scale deployment as newsrooms shift from testing individual tools to incorporating AI into their editorial and business workflows, says Ezra Eeman, lead of WAN-IFRA’s AI in Media initiative.

The shift reflects the speed at which generative AI has moved into mainstream use. ChatGPT now has more than 900 million weekly users and processes roughly 2.5 billion prompts every day.

Usage is also expanding beyond early adopters. In the UK, adoption among users aged 45 and older grew by more than 220 percent last year. Generative AI apps are projected to rank among the five most-used categories by time spent, alongside streaming platforms, social media, and games.

Speaking at our recent AI in Media Forum 2026 in Bangalore, Eeman, who is also director of strategy & innovation at NPO in the Netherlands, said the conversation in newsrooms is already shifting.

“The question is no longer whether we should explore AI, but whether we are ready to operate it at scale in newsrooms,” he said.

“Hundreds of millions of people use these tools daily. That changes how audiences find information, interact with media, and how newsrooms operate.”

AI moves from experimentation to everyday newsroom tools

Inside news organisations, AI is increasingly becoming part of journalists’ daily workflows.

Most adoption still revolves around simple tools that streamline tasks rather than replace editorial work. In the UK, 56 percent of journalists use AI at least weekly.

Many of the tools currently in use are practical productivity aids, often developed internally by publishers, Eeman noted. These include transcription services such as GoodTape, and newsroom-built tools that help turn reporting into social media assets or visualisations.

“The promise was that AI would take over repetitive tasks and give journalists more time for creative work,” he said. “What we see in reality is that these systems still require prompting, checking, editing, and verification. In many cases they introduce new steps in the workflow rather than removing them.”

Newsrooms begin experimenting with AI agents

While most newsroom AI tools remain relatively simple, some organisations are beginning to experiment with systems that automate multi-step workflows.

These “agents” can perform a sequence of tasks – retrieving assets, editing text or video, or preparing content for distribution – with limited human intervention. Instead of responding to a single prompt, they coordinate several processes.

An example Eeman cited is the Japanese company TNL Media Genie, which is developing what it describes as an “agentic newsroom,” where AI systems manage parts of the production workflow.

Another example comes from the European publisher Mediahuis, where teams have experimented with agents capable of drafting stories, editing text, conducting fact checks, and performing legal checks before a human editor reviews the output.

Despite these developments, Eeman cautioned that fully autonomous systems remain unreliable.

“Real autonomy, for now, is still very much an illusion,” he said. “These systems tend to optimise for very specific goals, but they struggle when they need broader editorial judgement or contextual understanding. That is why human oversight remains essential in newsroom use.”
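For readers who want a concrete picture, the multi-step workflow described above (automated editing, fact checks, and legal checks, followed by a human editor) can be sketched in a few lines of Python. This is an illustrative sketch only, not a description of any publisher's actual system: the `Draft` class, the step functions, and the digit-based "fact check" are hypothetical placeholders, and the sample text is invented.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Draft:
    """A story draft moving through the pipeline (hypothetical structure)."""
    text: str
    notes: List[str] = field(default_factory=list)
    approved: bool = False  # only a human editor may set this to True


def edit_step(draft: Draft) -> Draft:
    # Placeholder for an editing agent: collapse repeated whitespace.
    draft.text = " ".join(draft.text.split())
    draft.notes.append("edit: whitespace normalised")
    return draft


def fact_check_step(draft: Draft) -> Draft:
    # Placeholder heuristic: flag sentences containing digits for verification.
    flagged = [s for s in draft.text.split(". ") if any(c.isdigit() for c in s)]
    draft.notes.append(f"fact-check: {len(flagged)} claim(s) flagged for verification")
    return draft


def legal_check_step(draft: Draft) -> Draft:
    # Placeholder: the automated step gives no verdict and defers to a person.
    draft.notes.append("legal: no automated verdict, refer to editor")
    return draft


def run_pipeline(draft: Draft, steps: List[Callable[[Draft], Draft]]) -> Draft:
    # Run each automated step in order; none of them can approve the draft.
    for step in steps:
        draft = step(draft)
    return draft


def human_review(draft: Draft, editor_approves: bool) -> Draft:
    # The human editor makes the final publication decision.
    draft.approved = editor_approves
    return draft


draft = run_pipeline(
    Draft("The  budget grew by 5 percent. The mayor welcomed it."),
    [edit_step, fact_check_step, legal_check_step],
)
for note in draft.notes:
    print(note)
print("approved before review:", draft.approved)
```

The design choice worth noting is that approval lives outside the automated steps: the agents can annotate and transform the draft, but only `human_review` can mark it ready, which mirrors the oversight Eeman describes as essential.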

From ‘adding AI to media’ to ‘adding media to AI’

Beyond newsroom workflows, Eeman argued that AI may fundamentally reshape how audiences interact with news and information.

“We are moving from adding AI to media to adding media to AI,” he said. “For years we asked how AI could support journalism. Increasingly the question is how journalism fits into AI systems that people use as their primary interface for information.”

In this emerging environment, he suggested that conversational assistants and recommendation systems could become the first point of contact between audiences and information.

Rather than visiting individual websites or apps, users increasingly interact with AI systems that retrieve and summarise information from multiple sources.

Within a single interface, a user might ask for travel recommendations, request a playlist, check the weather, or ask questions about current events without leaving the conversation. As these ecosystems expand across browsers, devices, and operating systems, they may increasingly mediate how news is discovered and consumed.

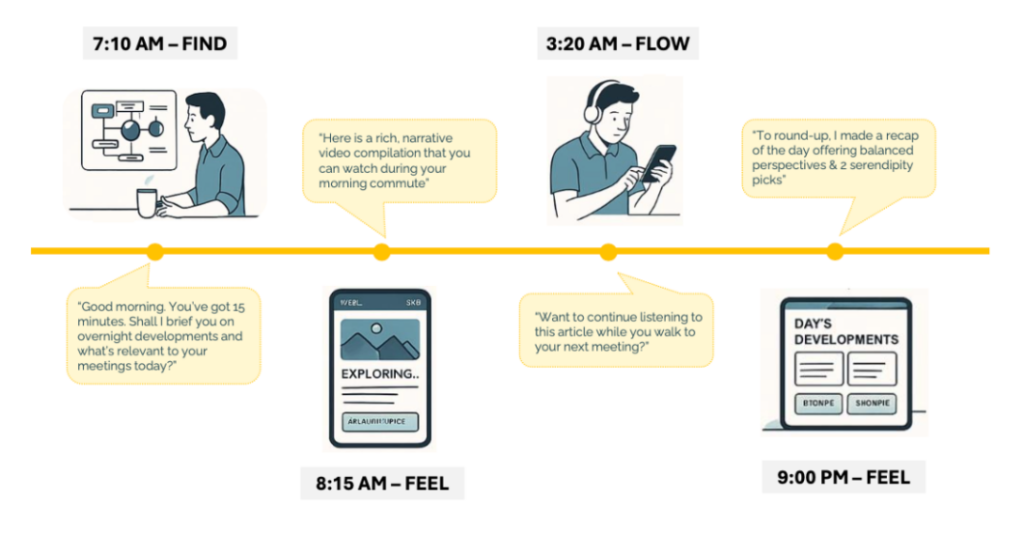

He described this shift through three dynamics – finding, feeling, and flowing.

Finding refers to AI systems surfacing information before users actively search for it. Feeling describes content that adapts to a user’s context or preferences. Flowing refers to information moving seamlessly across devices and formats, such as starting an interaction on a phone and continuing it through a smart speaker or television.

Adapting to this environment may require publishers to rethink how journalism is structured.

Eeman proposed that, instead of existing only as a single finished article, reporting could be broken into smaller modular components – sometimes described as “news atoms” – that can be assembled into summaries, audio briefings, or deeper explanations depending on how users request information.
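One way to picture "news atoms" is as small, typed records that can be filtered and recombined on request. The sketch below is a minimal illustration under stated assumptions: the `NewsAtom` fields, the `kind` labels ("fact", "quote", "context"), and the `assemble` function are hypothetical, and the sample story is invented. It is not a format proposed by Eeman or used by any publisher.

```python
from dataclasses import dataclass
from typing import Iterable, Optional, Set


@dataclass(frozen=True)
class NewsAtom:
    """One self-contained unit of reporting (hypothetical schema)."""
    kind: str    # assumed labels: "fact", "quote", "context"
    text: str
    source: str  # attribution travels with every atom


def assemble(atoms: Iterable[NewsAtom], kinds: Set[str],
             max_atoms: Optional[int] = None) -> str:
    # Keep atoms of the requested kinds, in their original order.
    selected = [a for a in atoms if a.kind in kinds]
    if max_atoms is not None:
        selected = selected[:max_atoms]
    return " ".join(a.text for a in selected)


# Invented example story, broken into atoms.
atoms = [
    NewsAtom("fact", "The council approved the budget on Tuesday.", "council minutes"),
    NewsAtom("quote", '"This was overdue," the mayor said.', "interview"),
    NewsAtom("context", "The previous budget was rejected last year.", "archive"),
]

# A one-line summary and a fuller explainer built from the same atoms.
summary = assemble(atoms, kinds={"fact"}, max_atoms=1)
explainer = assemble(atoms, kinds={"fact", "quote", "context"})

print(summary)
print(explainer)
```

Because each atom carries its own `source`, any assembled output could in principle be traced back to its origin, which matters for the attribution concerns raised later in the talk.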

AI interfaces are reshaping the publisher business model

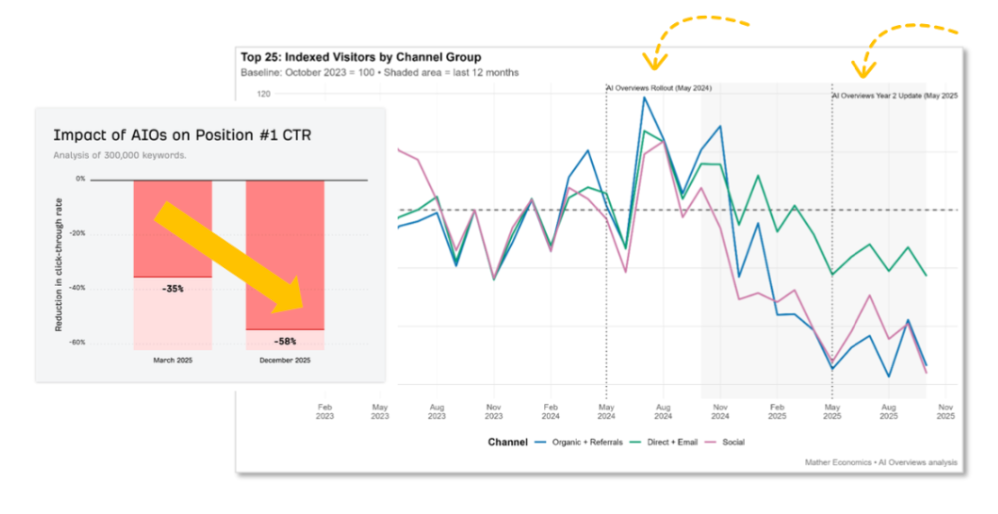

These changes are also beginning to affect the economic foundations of digital publishing.

“The value chain – create content, people find it, they click, you monetise – is starting to break down,” he said. “When AI systems provide direct answers, fewer users necessarily click through to the original source.”

One analysis of 300,000 search keywords found that when AI-generated answers appear in search results, click-through rates for the top positions can drop by as much as 58 percent.

As discovery shifts toward AI interfaces, publishers face new decisions about how their content is accessed and used, Eeman noted.

“Some organisations have moved to block automated crawlers through services such as Cloudflare, while others are exploring structured ways for AI systems to access journalism through emerging protocols designed for conversational interfaces,” he added.

The economic model remains unsettled. Technology companies often seek broad access to web content, while publishers argue for compensation, attribution, and greater control over how their material is used.

Some news organisations are already experimenting with alternative approaches. The Associated Press, for example, is exploring how parts of its archive can be structured as data products that AI systems and other organisations can license for verified information.

Eeman said different strategies may emerge depending on the type of publisher.

“Large national outlets may have leverage to negotiate partnerships with technology companies, while local publishers can focus on original reporting that AI systems cannot easily replicate. Niche publishers, meanwhile, may benefit from building direct relationships with specialised audiences.”

Across the industry, he said, several principles should guide how journalism interacts with AI systems.

“There is no content without consent,” Eeman said. “There needs to be fair compensation for the use of journalistic work. And accuracy and attribution must remain central in every AI-generated answer.”

A media ecosystem built around those principles, he added, would ultimately benefit both publishers and the AI systems that increasingly rely on reliable information.

This story was first published by the World Association of News Publishers (WAN-IFRA). Access our latest report on AI (free for members).

Neha Gupta is research editor at WAN-IFRA.