The release of the first findings of the Publisher Research Council’s Publisher Audience Measure Survey (PAMS) research was delayed by two months so that member concerns could be addressed.

Nine concerns arose, all of which the PRC fully addressed before the PAMS 2017 data was released to agencies.

“During the two-month period, the PRC and Nielsen did a series of validations and analysis that the members had requested,” explains Peter Langschmidt, consultant to the PRC. “We are the servant of our members, so if a member has a concern we have to address it,” he adds.

The concerns

One of the queries related to why the ratio between high frequency readers (HFR) and average issue readers (AIR) was so low in PAMS. “We analysed 20 years of AMPS and PAMS figures and we looked at the results… The relationship between HFR and AIR has been in decline for years and is happening all around the world, as confirmed by Nielsen, our research partner, whose Watch business is in 40 markets globally,” comments Langschmidt, explaining that the rise of online reading means people don’t buy newspapers as regularly as they used to.

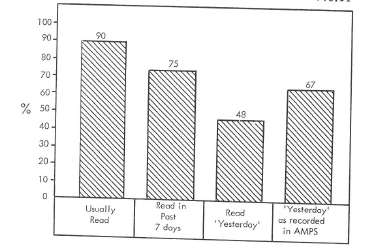

The other major change is in the wording of the reading frequency question in PAMS. “In PAMS we ask, ‘How many issues do you normally read?’ In AMPS we used to ask, ‘How many of the last six issues have you read within the (last six issue period)?’; this is a far more defined period and question,” Langschmidt says. So, the ratio between the new “normally” and the old “six issue periods” question will, by definition, be much lower. This ‘normally read’ question was used as a status deflating question; it gives the respondent a chance to “impress” the interviewer. As can be seen from the comparative methodologies tests conducted by MRA in the 1980s, this has the effect of reducing reading claims by around 20%.

EFFECT OF STATUS DEFLATING QUESTION

Source: Reliability of Response in Readership Research

One of the objectives in PAMS was to bring down Readers Per Copy (RPC), and it therefore introduced this best practice “normally read” frequency question.

The use of the flooding methodology (which interviews more than one person in 70% of households) was also raised as a concern, with members asking whether it introduces bias. “In our RFP and tender process, every one of the global research companies had no problem with utilising flooding, and it has been used in Radio and TV studies for many years. While we introduced flooding primarily as a means of saving 58% of the costs of conventional studies, it delivered another great benefit. Flooded respondents’ claimed reading was a full 9% lower than our primary respondent readers. So, we can comfortably say there’s no bias,” responds Langschmidt.

Another concern related to the 12-month filter question asked in the PAMS survey, vs the six-month filter used in AMPS. This new filter period had been discussed and agreed by all members during the tender and questionnaire approval process, explains Langschmidt.

“Nielsen are the biggest market research company in the world and their Watch business is present in 40 markets and analysed all 87 world-wide research studies utilising PDRF data. Twelve-month is the most popular filter period in 45% of the 78 countries, only 8% use a six-month filter,” he says.

“In addition to 12 months being the most popular, we also moved to 12 months to match the Establishment Survey and the periods used for other media types to enable us to reach comparisons; 12 months is also far easier for respondents to remember: Erhard Meier conducted an audit for the SAARF Print Council a few years back and said his preference was for a common 12M filter. At any date of interview, the past 12 months is easier to think about than the past six months! It is less taxing to respondents,” Langschmidt says.

He says many of the queries were based on what used to happen with AMPS. “Just because we did it in AMPS doesn’t mean it was right. We took a clean sheet of paper when we started the PRC and we had a very rigorous RFP and tender process, and all the companies did a complete re-evaluation of methodologies and looked at best practice worldwide,” says Langschmidt.

“In AMPS, we used to use a recency methodology called FRIPI (First Reading within Issue Period). We consciously did away with FRIPI because there’s no market in the world that uses it anymore. We were the only ones using it. When we analysed the effects of not using it in AMPS 2015, we saw that while it adds tremendous complexity, it only reduces readership by a mere 4% on average, and for many titles, had no effect, whatsoever.”

A focus on Core Readers

One of the PRC’s objectives was to bring down readers per copy “to more believable levels”.

“We did that with daily newspapers, but with monthly magazines, we still have a problem … The recency method doesn’t work as well for monthlies as it does for dailies and weeklies, since it is way harder to remember if you read a magazine within the last month than if you read a newspaper yesterday,” he says.

Langschmidt created a new FOR (Frequency Over Recency) metric called Core Readers, which overlays the probability derived from the frequency question on the Recency average issue readers (AIR).

For example, daily newspapers use a five-issue frequency scale, as there are five issues published from Monday to Friday. The probability for each frequency group is applied to the standard Recency AIR for that group, and the results are summed to arrive at Core Readers.

DAILY NEWSPAPER CORE READER CALCULATION EXAMPLE

| FREQUENCY GROUP | PROBABILITY (A) | AIR READERS (B) | CORE READERS (A x B) |
| --- | --- | --- | --- |
| 1 OUT OF 5 ISSUES | 0.2 | 120 | 24 |
| 2 OUT OF 5 ISSUES | 0.4 | 115 | 46 |
| 3 OUT OF 5 ISSUES | 0.6 | 95 | 57 |
| 4 OUT OF 5 ISSUES | 0.8 | 158 | 126 |
| 5 OUT OF 5 ISSUES | 1.0 | 212 | 212 |
| TOTAL READERS | | 700 | 465 |
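For readers who want to check the arithmetic, the daily newspaper example above can be reproduced in a few lines of Python. This is only an illustrative sketch: the function and variable names are hypothetical, the figures are copied from the table, and rounding each row to a whole reader is an assumption made to match the published numbers, not a documented PRC or Nielsen procedure.

```python
# Illustrative sketch of the Core Readers example table.
# Names are hypothetical; figures are copied from the table above.

ISSUES_IN_SCALE = 5  # daily newspapers: Monday to Friday

# (issues read out of five, AIR readers in that frequency group)
FREQUENCY_GROUPS = [
    (1, 120),
    (2, 115),
    (3, 95),
    (4, 158),
    (5, 212),
]


def core_readers_by_group(groups, issues_in_scale):
    """Return (probability, AIR, core readers) for each frequency group."""
    rows = []
    for issues_read, air in groups:
        probability = issues_read / issues_in_scale  # column A
        core = round(probability * air)              # A x B, rounded (assumption)
        rows.append((probability, air, core))
    return rows


rows = core_readers_by_group(FREQUENCY_GROUPS, ISSUES_IN_SCALE)
total_air = sum(air for _, air, _ in rows)
total_core = sum(core for _, _, core in rows)

for probability, air, core in rows:
    print(f"probability={probability:.1f}  AIR={air}  core={core}")
print(f"Total AIR readers: {total_air}, total Core Readers: {total_core}")
```

Run as written, the sketch gives the table's totals of 700 AIR readers and 465 Core Readers, which puts Core Readers at roughly two-thirds of AIR in this example.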

The PAMS research doesn’t just measure the audiences of print newspapers and magazines; digital is also included. There is no average issue metric online, so past 7 days and past 4 weeks metrics are used instead.

Independent verification from multiple sources

Since the decline of SAARF, there’s a perception in the industry that the new media-owned JICs like the PRC and the BRC have a vested interest and are “marking their own homework”. But this could not be further from the truth, since the new media research has even more checks and balances than there were in the past.

All JIC research is validated by members, who are after all fierce competitors, as well as by overseas auditors.

Notwithstanding this, the PRC has established a further independent, unbiased entity, the Technical Oversight Committee (TOC), comprising global media and research experts as well as local research experts and media doyens, to represent users of the PAMS data.

“Every item in this audit checklist was answered by the PRC and Nielsen to the satisfaction of the majority of the members and validated by the independent TOC.

“We are confident that PAMS represents an accurate reflection of the reading landscape in South Africa. Being based on respondent recall of a masthead combined with memory decay, it is not perfect, but this claimed recency method is the most used and most cost-effective readership methodology used globally. This is proved by the fact that a non-existent title, Tshepo, that we included to validate our methodology, was only ‘read’ by six out of over 17 000 respondents!” says Langschmidt.

The PRC and Nielsen, the largest market research company in the world, stand united in the validity and representivity of PAMS. The methodology is used and accepted in many markets and has been verified by the TOC.

“PAMS is state of the art multiple platform reading research, utilising global best practice and multi country testing combined with many years of trial and experience. We are confident that the reading results can be used by both the publishers and the agencies and advertisers as a valid trading currency,” says Terry Murphy, local head of Nielsen Watch, which conducted the PAMS survey on behalf of the PRC.

Michael Bratt is a multimedia journalist at Wag the Dog, publishers of The Media Online and The Media. Follow him on Twitter @MichaelBratt8